If you’re not on the SecurityMetrics.org mailing list, you missed a discussion, started by Lance Spitzner (@lspitzner), about the Privacy Rights Clearinghouse Chronology of Data Breaches data source. You’ll need to subscribe to the list to see the thread, but one innocent question put me down the path of taking a look at the aggregated data, with the intent of helping folks understand its overall utility/efficacy when trying to craft messages from it.

Before delving into the data, please note that PRC does an excellent job detailing source material for the data. They fully acknowledge some of the challenges with it, but a picture (or two) is worth a thousand caveats. (NOTE: Charts & numbers have been produced from January 20th, 2013 data).

The first thing I did was try to get a feel for overall volume:

Total breach record entries across all years (2005-present): 3573

Number of entries with ‘Total Records Lost’ filled in: 751

% of entries with ‘Total Records Lost’ filled in: 21.0%

Takeaway #1: Be very wary of using any “Total Records Breached” data from this data set.
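For anyone who wants to repeat the fill-rate check, here is a minimal Python sketch. It assumes the export has been read into a list of dicts (for example with `csv.DictReader`) and that the column is literally named `Total Records Lost`; both are assumptions on my part, and the rows below are placeholders that only reproduce the counts quoted above.

```python
def fill_rate(rows, field="Total Records Lost"):
    """Return (filled, total, percent) for a column that may be blank.

    A value counts as filled when it is present and not just whitespace.
    """
    total = len(rows)
    filled = 0
    for row in rows:
        value = row.get(field)
        if value is not None and str(value).strip():
            filled += 1
    percent = round(100.0 * filled / total, 1) if total else 0.0
    return filled, total, percent


# Placeholder rows: 751 with a value, the rest blank (3573 entries in all).
rows = [{"Total Records Lost": "1000"}] * 751 + [{"Total Records Lost": ""}] * (3573 - 751)
print(fill_rate(rows))  # (751, 3573, 21.0)
```

Whether a literal zero should count as "filled in" is a judgment call; this sketch counts it.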

It may help to see that computation broken down by reporting source over the years that the data file spans:

This view also gives us:

Takeaway #2: Not all data sources span all years and some have very little data.

However, Lance’s original goal was to compare “human error” vs “technical hack”. To do this, he combined DISC, PHYS, PORT & STAT into one category (accidental/human :: ACC-HUM) and HACK, CARD & INSD into another (malicious/attack :: MAL-ATT). Here’s what that looks like when broken down across reporting sources across time:


This view provides another indicator that one might not want to place a great deal of faith on the PRC’s aggregation efforts. Why? It’s highly unlikely that DatalossDB had virtually no breach recordings in 2011 (in fact, it’s more than unlikely, it’s not true). Further views will show some other potential misses from DatalossDB.

Takeaway #3: Do not assume the components of this aggregated data set are complete.
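The grouping and the hunt for gaps can be sketched in a few lines of Python. The two meta categories come straight from Lance’s definition above; the row keys (`source`, `year`, `type`), the `UNKNOWN` fallback label and the sample rows are made up for illustration and are not the real export format.

```python
from collections import Counter

# Lance's two meta categories, as described in the post.
META_TYPE = {
    "DISC": "ACC-HUM", "PHYS": "ACC-HUM", "PORT": "ACC-HUM", "STAT": "ACC-HUM",
    "HACK": "MAL-ATT", "CARD": "MAL-ATT", "INSD": "MAL-ATT",
}


def meta_type(breach_type):
    """Map a breach type code to its meta category (anything else is UNKNOWN)."""
    return META_TYPE.get(breach_type.strip().upper(), "UNKNOWN")


def tally(rows):
    """Count entries per (source, year, meta type)."""
    return Counter((r["source"], r["year"], meta_type(r["type"])) for r in rows)


def missing_years(rows, years):
    """For each source, list the years in `years` with no entries at all."""
    seen = {}
    for r in rows:
        seen.setdefault(r["source"], set()).add(r["year"])
    return {src: sorted(set(years) - ys) for src, ys in seen.items() if set(years) - ys}


# Made-up sample rows, only to show the shape of the output.
sample = [
    {"source": "A", "year": 2010, "type": "HACK"},
    {"source": "A", "year": 2012, "type": "PORT"},
    {"source": "B", "year": 2010, "type": "UNKN"},
    {"source": "B", "year": 2011, "type": "card"},
    {"source": "B", "year": 2012, "type": "DISC"},
]
print(missing_years(sample, range(2010, 2013)))  # {'A': [2011]}
```

A year with zero entries for a large source is a prompt to check the aggregation, not evidence that nothing happened.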

We can get a further feel for data quality and also which reporting sources are weighted more heavily (i.e. which ones have more records, thus implicitly placing a greater reliance on them for any calculations) by looking at how many records they each contributed to the aggregated population each year:


I’m not sure why 2008 & 2009 have such small bars for Databreaches.net and PHIPrivacy.net, and you can see the 2011 gap in the DatalossDB graph.

At this point, I’d (maybe) trust some aggregate analysis of the HHS (via PHI), CA Attorney General & Media data, but would need to caveat any conclusions with the obvious biases introduced by each.

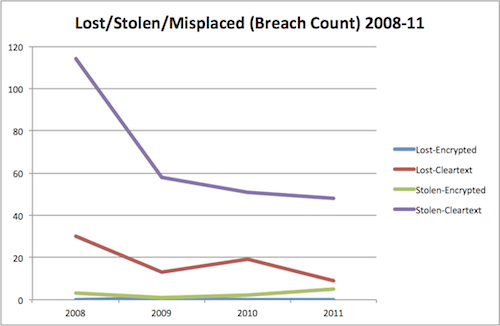

Even with these issues, I really wanted a “big picture” view of the entire set and ended up creating the following two charts:


(You’ll probably want to view the PDF documents of each: [1] [2] given how big they are.)

Those charts show the number of breaches-by-type by month across the 2005-2013 span by reporting source. The only difference between the two is that the latter one is grouped by Lance’s “meta type” definition. These views enable us to see gaps in reporting by month (note the additional aggregation issue at the tail end of 2010 for DatalossDB) and also to get a feel for the trends of each band (note the significant increase in “unknown” in 2012 for DatalossDB).

Takeaway #4: Do not ignore the “unknown” classification when performing analysis with this data set.

We can see other data issues if we look at it from other angles, such as the state the breach was recorded in:


From this view we can see at least three issues (among them a missing value and occurrences recorded outside the US), but the number of breaches seems to mostly align with population (the discrepancies make sense given the lack of uniform breach reporting requirements).

It’s also very difficult to do any organizational analysis (I’m a big fan of looking at “repeat offenders” in general) with this data set without some significant data cleansing/normalization. For example, all of these are “Bank of America”:

[1] "Bank of America"

[2] "Wachovia, Bank of America, PNC Financial Services Group and Commerce Bancorp"

[3] "Bank of America Corp."

[4] "Citigroup, Inc., Bank of America, Corp."
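To give a feel for what even a first pass at normalization involves, here is a rough Python sketch that reduces the four strings above to a common key. The suffix list and the splitting rule are my own assumptions, not anything the PRC provides, and splitting on “and” will mangle organizations that have the word in their name.

```python
import re

# Hypothetical list of corporate suffixes to drop; extend as needed.
SUFFIXES = {"corp", "corporation", "inc", "incorporated", "llc", "ltd", "co"}


def org_keys(raw):
    """Split a raw organization field into a set of normalized names."""
    keys = set()
    # Entries naming several organizations separate them with commas or "and".
    for part in re.split(r",|\band\b", raw):
        words = [w.strip(".").lower() for w in part.split()]
        # Drop trailing suffixes; a part that is only a suffix disappears.
        while words and words[-1] in SUFFIXES:
            words.pop()
        if words:
            keys.add(" ".join(words))
    return keys


names = [
    "Bank of America",
    "Wachovia, Bank of America, PNC Financial Services Group and Commerce Bancorp",
    "Bank of America Corp.",
    "Citigroup, Inc., Bank of America, Corp.",
]
for name in names:
    print(sorted(org_keys(name)))
```

All four entries now share the key `bank of america`, which is enough to count repeat appearances, though nowhere near a full cleansing.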

Without any cleansing, here are the orgs with two or more reported breaches since 2005:

(apologies for the IFRAME but Google’s Fusion Tables are far too easy to use when embedding data tables)

Takeaway #5: Do not assume that just because a data set has been aggregated by someone and published that it’s been scrubbed well.

Even if the above sets of issues were resolved, the real details are in the “breach details” field, which is a free-form text field providing more information on who/what/when/where/why/how (with varying degrees of consistency & completeness). This is actually the information you really need. The HACK attribute is all well-and-good, but what kind of hack was it? This is one area VERIS shines. What advice are you going to give financial services (BSF) orgs from this extract:


HACKs are up in 2012 from 2010 & 2011, but what type of HACKs against what size organizations? Should smaller orgs be worried about desktop security and/or larger orgs start focusing more on web app security? You can’t make that determination without mining that free form text field. (NOTE: as I have time, I’m trying to craft a repeatable text analysis function I can perform on that field to see what can be automatically extracted)
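As a starting point for that kind of mining, a crude keyword tagger might look like the Python sketch below. To be clear, the labels and patterns are illustrative guesses, not a vetted taxonomy (and certainly not VERIS), and the example sentences are invented rather than taken from the data set.

```python
import re

# Hypothetical labels and patterns; a real pass would need far more care.
PATTERNS = {
    "lost-or-stolen-device": re.compile(r"(stolen|lost|missing) (laptop|computer|drive)", re.I),
    "malware": re.compile(r"malware|virus|keylogger|trojan", re.I),
    "web-app": re.compile(r"sql injection|web ?site|web application", re.I),
}


def tag_details(text):
    """Return the sorted labels whose pattern appears in the free-form text."""
    return sorted(label for label, pattern in PATTERNS.items() if pattern.search(text))


print(tag_details("Attackers used SQL injection against the company website."))
print(tag_details("A stolen laptop contained customer files."))
```

Entries that come back with no tags are just as interesting as the tagged ones, since they show how much of the field a simple approach cannot reach.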

Takeaway #6: This data set is pretty much what the PRC says it is: a chronology of data breaches. More in-depth analysis is not advised without a massive clean-up effort.

Finally, hypothesizing that the PRC’s aggregation could have resulted in duplicate records, I created a subset of the records based solely on breach “Date Made Public” + “Organization Name” and then sifted manually through the breach text details; six duplicate entries were found. Interestingly enough, only one instance of duplicate records was found across reporting databases. My hunch was that DatalossDB or DataBreaches.NET would have had records that other, smaller databases also included; given the quality of the data, this particular duplicate detection mechanism does not rule that out.
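The candidate-selection step is easy to sketch in Python; the manual read-through of the details field is the part that can’t be automated away. The column names follow the field names quoted above, while the `Source` key, the lowercasing of the name and the sample rows are assumptions for illustration.

```python
from collections import defaultdict


def duplicate_candidates(rows):
    """Group rows sharing the same public date and organization name."""
    groups = defaultdict(list)
    for row in rows:
        key = (row["Date Made Public"].strip(), row["Organization Name"].strip().lower())
        groups[key].append(row)
    # Only keys with more than one row are worth a manual look.
    return {key: grp for key, grp in groups.items() if len(grp) > 1}


def spans_sources(group):
    """True when the candidate rows come from more than one reporting source."""
    return len({row["Source"] for row in group}) > 1


# Invented rows, only to show the shape of the result.
sample_rows = [
    {"Date Made Public": "January 1, 2012", "Organization Name": "Example Org", "Source": "A"},
    {"Date Made Public": "January 1, 2012", "Organization Name": "example org ", "Source": "B"},
    {"Date Made Public": "March 3, 2012", "Organization Name": "Other Org", "Source": "A"},
]
for key, group in duplicate_candidates(sample_rows).items():
    print(key, len(group), spans_sources(group))
```

Because the key depends on exact dates and names, the same breach recorded with a different date or a variant spelling slips through, which is why this check cannot rule out further duplicates.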

Conclusion/Acknowledgements

Despite the criticisms above, the efforts by the PRC and their sources for aggregation are to be commended. Without their work to document breaches we would only have the mega-media-frenzy stories and labor-intensive artifacts like the DBIR to work with. Just because the data isn’t perfect right now doesn’t mean we won’t get to a point where we have the ability to record and share this breach information like the CDC does diseases (which also isn’t perfect, btw).

I leave you with another column of numbers that shows—if broken down by organization type and breach type—there is an average of 2 breaches per-breach/org-type-per-year (according to this data):

(The complete table includes the mean, median and standard deviation for each type.)
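For reference, a per-year summary of this kind can be computed along the following lines in Python. The row keys and sample values are invented, and this sketch only counts years in which a given organization type/breach type pair has at least one entry, which may or may not match how the table above was built.

```python
from collections import Counter, defaultdict
from statistics import mean, median, stdev


def yearly_stats(rows):
    """Mean, median and standard deviation of yearly breach counts
    for each (org type, breach type) pair."""
    per_year = Counter((r["org_type"], r["breach_type"], r["year"]) for r in rows)
    by_pair = defaultdict(list)
    for (org, kind, _year), count in per_year.items():
        by_pair[(org, kind)].append(count)
    return {
        pair: {
            "mean": mean(counts),
            "median": median(counts),
            # stdev needs at least two data points.
            "sd": stdev(counts) if len(counts) > 1 else 0.0,
        }
        for pair, counts in by_pair.items()
    }


# Invented rows: one BSF/HACK entry in 2010 and three in 2011.
example = (
    [{"org_type": "BSF", "breach_type": "HACK", "year": 2010}]
    + [{"org_type": "BSF", "breach_type": "HACK", "year": 2011}] * 3
)
print(yearly_stats(example)[("BSF", "HACK")])
```

Leaving out zero-count years inflates the mean, so it is worth deciding up front whether an empty year means "no breaches" or "no reporting".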

Lance’s final question to me (on the list) was “Bob, what do recommended as the next step to answer the question – What percentage of publicly known data breaches are deliberate cyber attacks, and what percentage are human based accidental loss/disclosure?”

I’d first start with a look at the DBIR (especially this year’s) and then see if I could get a set of grad students to convert a complete set of DatalossDB records (and, perhaps, the other sources) into VERIS format for proper analysis. If any security vendors are reading this, I guarantee you’ll gain significant capital/accolades within/from the security practitioner community if you were to sponsor such an effort.

Comments, corrections & constructive criticisms are heartily welcomed. The data crunching & graphing scripts are available on request, and I may upload them to my GitHub repository once I clean them up a bit.